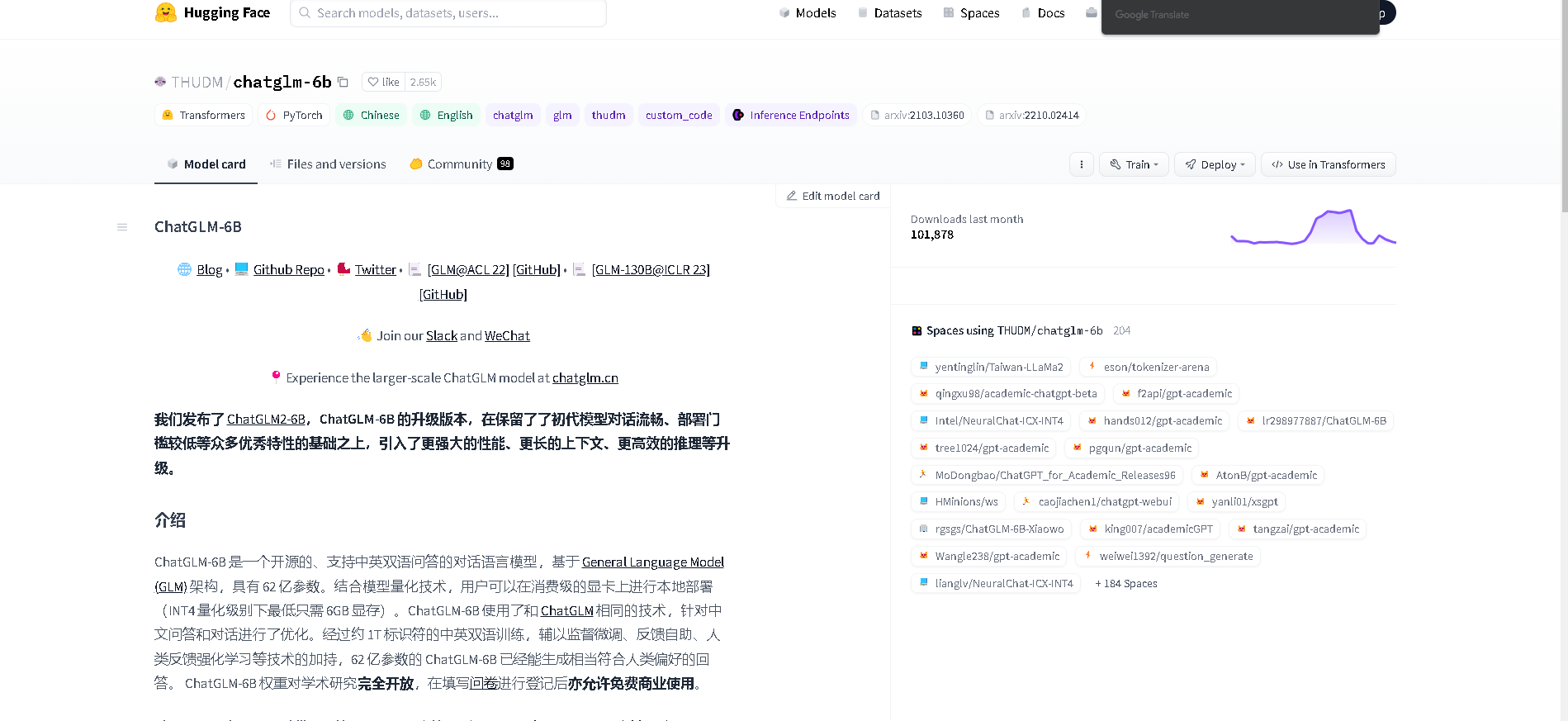

ChatGLM-6B is an open bilingual conversational language model with 6.2 billion parameters, built on the General Language Model (GLM) architecture. It supports both Chinese and English and is optimized for dialogue and question answering. The model was trained on approximately 1 trillion tokens and then refined with supervised fine-tuning, feedback bootstrap, and reinforcement learning from human feedback (RLHF), so that its responses align more closely with human preferences. With INT4 quantization it needs only about 6GB of GPU memory, so it can be deployed locally on consumer-grade GPUs. The model is open for academic research, and commercial use is free after completing a questionnaire, which makes it straightforward for developers and researchers to integrate into existing systems.
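
To put the 6GB figure in context, here is a rough, weights-only estimate of how much memory 6.2 billion parameters occupy at different precisions. It is a back-of-the-envelope sketch, not a measurement: the bytes-per-parameter values are the nominal sizes of each format, the names `PARAMS`, `BYTES_PER_PARAM`, and `weight_memory_gb` are illustrative, and 1 GB is taken as 10^9 bytes.

```python
# Rough weight-memory estimate for a 6.2B-parameter model.
# Assumption: weights only; activations and runtime overhead are ignored.

PARAMS = 6.2e9  # parameter count stated in the text

# Nominal storage cost per parameter at each precision (assumed values).
BYTES_PER_PARAM = {"FP16": 2.0, "INT8": 1.0, "INT4": 0.5}


def weight_memory_gb(params: float, bytes_per_param: float) -> float:
    """Return the weight storage in GB, taking 1 GB = 1e9 bytes."""
    return params * bytes_per_param / 1e9


estimates = {
    name: weight_memory_gb(PARAMS, size)
    for name, size in BYTES_PER_PARAM.items()
}

for name, gb in estimates.items():
    print(f"{name}: ~{gb:.1f} GB for weights")
```

Under these assumptions the INT4 weights alone come to roughly 3.1 GB, compared with about 12.4 GB at FP16. The remaining headroom in the quoted 6GB requirement presumably covers activations, the attention cache, and runtime overhead, though that breakdown is an assumption and is not stated in the source.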